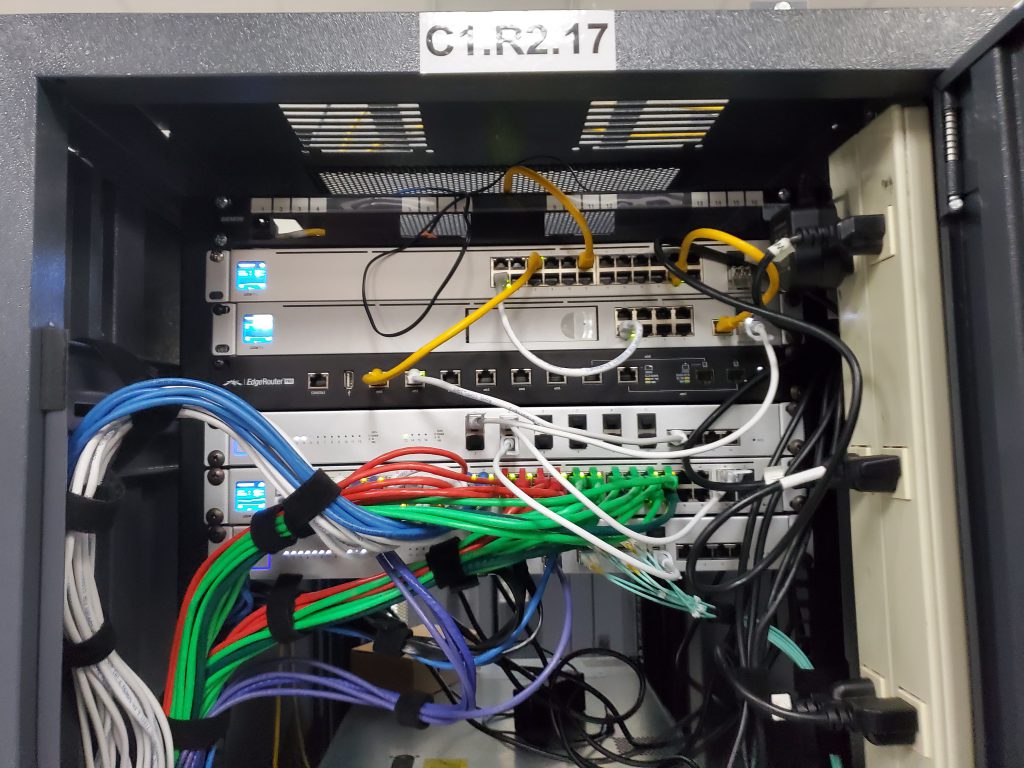

Recently we upgraded the networking in our CoLo, replacing our horrible, bought-off-eBay NetGear switches (on which not all the features worked correctly) with a brand new (actually purchased new) Ubiquiti network stack. We went with Ubiquiti because they have a really good reputation, a fantastic price point, and a UI that is really simple to use while giving us all of the features that we were looking for.

Like any good IT deployment, we hit a snag when we were pushing out our network configuration. All of our servers have 10 Gig network cards in them, and our SAN also has 10 Gig network cards for our NFS shares (we are a VMware vSphere shop), so we have a storage network. We also wanted to put our VMs on the 10 Gig cards, as they were on 1 Gig ports before and we wanted them to have more bandwidth available to them.

The UniFi software on the Ubiquiti equipment has two different networking setups. The first is the base network, which we set up as our management network. Any other subnets that need to be set up are configured separately, and each of them requires a VLAN. We had a few networks to set up: our Infrastructure network, which we gave a VLAN of 4; our Storage network, which we gave a VLAN of 5; and our lab, which we gave a VLAN of 100.

Our VMware servers all have a dedicated NIC which we are using for our Management ports, so we didn’t need the Management network to be accessible from the NIC that the VMs were going to use. Within the UniFi software I created what is called a port profile, which can contain a variety of subnets. This way a single switch port can be on multiple subnets, which was exactly what I wanted. I wanted the 10 Gig ports and their NICs to be on the Storage, Infrastructure, and Lab networks, so I created a single port profile with all of these subnets in it. As the screenshot shows, when you do this you select a native network for the port profile.

After I got this set up, I was getting weird responses from the VMs and the VMware hosts that were trying to talk to the storage. I put VLAN IDs in VMware for all these networks as well, but things still weren’t talking correctly.

It turns out that whatever network you have configured as the native network on the port profile does not get a VLAN ID within VMware, because the switch sends that network’s traffic untagged. So in my case the storage network within VMware does not get a VLAN ID, while the other networks do, even though the storage network has a VLAN ID of 5 within the UniFi OS.
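To make the rule concrete, here is a minimal Python sketch of it. This is a simplified model for illustration only, not anything that talks to UniFi or vSphere: the function names are hypothetical, the VLAN numbers are the ones from this post, and it assumes Storage is the native network on the port profile (as it was in my case) and that a VLAN ID of 0 on a VMware port group means "no VLAN tagging".

```python
from typing import Optional

# VLANs as defined in UniFi in this post; Storage is assumed to be the
# native network on the port profile.
VLANS = {"Infrastructure": 4, "Storage": 5, "Lab": 100}
NATIVE = "Storage"


def switch_egress_tag(network: str) -> Optional[int]:
    """Tag carried by a frame leaving the switch port (None = untagged).

    The native network is sent untagged; every other network in the
    port profile is sent with its VLAN tag.
    """
    return None if network == NATIVE else VLANS[network]


def port_group_vlan_id(network: str) -> int:
    """VLAN ID to enter on the VMware port group for this network.

    0 stands for "no VLAN ID" here, which is what the native network needs.
    """
    return 0 if network == NATIVE else VLANS[network]


def port_group_accepts(port_group_vlan: int, frame_tag: Optional[int]) -> bool:
    """Simplified check: does the port group see this frame as its own?"""
    if port_group_vlan == 0:
        return frame_tag is None
    return frame_tag == port_group_vlan


if __name__ == "__main__":
    for name in VLANS:
        vlan = port_group_vlan_id(name)
        ok = port_group_accepts(vlan, switch_egress_tag(name))
        print(f"{name}: port group VLAN ID {vlan}, traffic flows: {ok}")
```

In this model, my original mistake is `port_group_accepts(5, switch_egress_tag("Storage"))`, which comes back `False`: VMware was waiting for frames tagged 5 while the switch was handing over untagged ones.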

Once I removed the VLAN ID from the storage network in VMware, the VMs were able to talk to their storage perfectly and all the VM subnets worked as expected.

Denny