Statistics are magical little objects within the database engine that have the ability to make your queries run fast or painfully slow. The reason that statistics are so important is because they tell the database engine what data exists within the database table and how much data exists. The problem with statistics comes from how often they are updated. By default in all versions and editions of SQL Server the statistics are updated when 20%+500 rows within the database table change. So if a database table has 10000 rows in it we need to change 2500 rows (2000 rows is 20% plus an additional 500 rows) for the statistics to be updated. With smaller tables like this having out of date statistics usually doesn’t cause to many problems. The problems really come into play with larger tables. For example if there are 50,000,000 rows in a table for the statistics to be automatically updated we would need to change 10,000,500 rows. Odds are it is going to take quite a while to change this number of rows. To fix this we can manually tell the SQL Server to  update the statistics by using the UPDATE STATISTICS command.

update the statistics by using the UPDATE STATISTICS command.

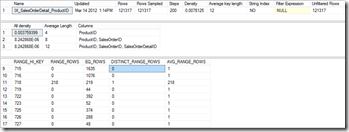

Within the statistic there are up to 200 values which are sampled from the column. The statistic shown below contains a few different columns. The statistic shows a series of values from the column which the statistic is built on. It also contains the count of the number of rows between that row and the next in the statistic. From this information the SQL Server is able to build the execution plan which is used to access the data. When the data within the statistic is out of date the SQL Server doesn’t make the correct assumptions about how much data there is and what the best way to access that data is. When the statistic gets updated the SQL Server is able to make better assumptions so the execution plan becomes better so the SQL Server is able to get the data faster.

Denny